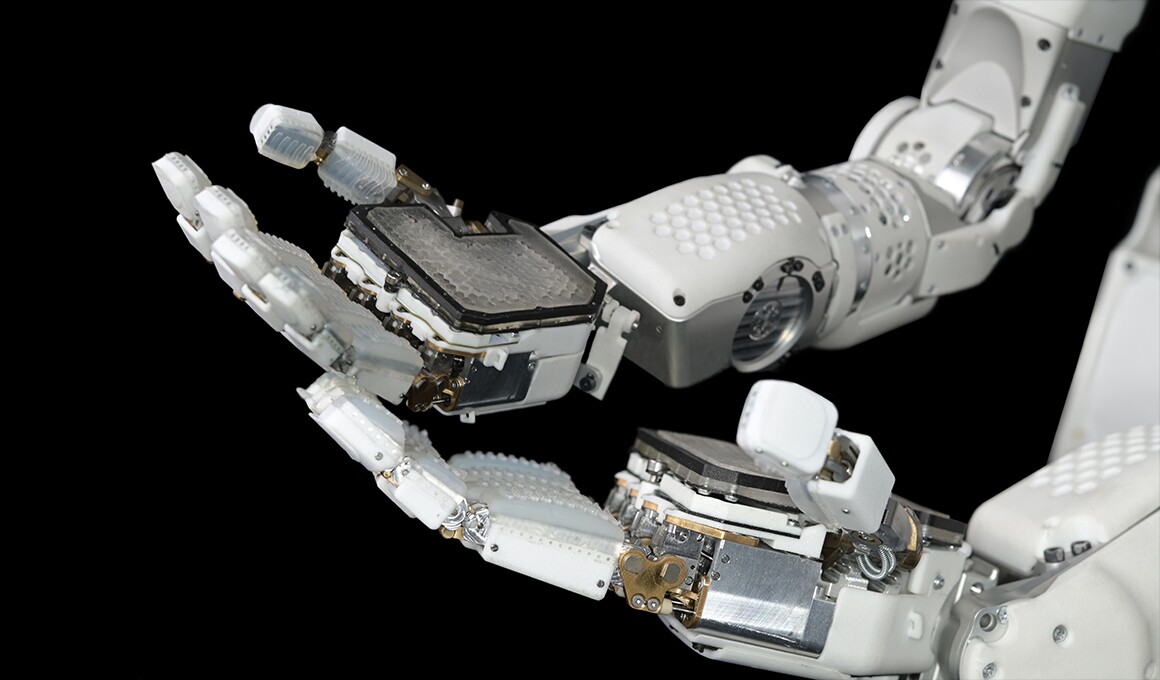

Sanctuary AI is one of the world’s leading humanoid robotics companies. Its Phoenix robot, now in its seventh generation, has dropped our jaws several times in the last few months alone, demonstrating a remarkable pace of learning and a fluidity and confidence of autonomous motion that shows just how human-like these machines are becoming.

Check out the previous version of Phoenix in the video below – its micro-hydraulic actuation system gives it a level of power, smoothness and quick precision unlike anything else we've seen to date.

Powered by Carbon, Phoenix is now autonomously completing simple tasks at human-equivalent speed. This is an important step on the journey to full autonomy. Phoenix is unique among humanoids in its speed, precision, and strength, all critical for industrial applications. pic.twitter.com/bYlsKBYw3i

— Geordie Rose (@realgeordierose) February 28, 2024

Gildert has spent the last six years with Sanctuary at the bleeding edge of embodied AI and humanoid robotics. It's an extraordinary position to be in at this point; prodigious amounts of money have started flowing into the sector as investors realize just how close a general-purpose robot might be, how massively transformative it could be for society, and the near-unlimited cash and power these things could generate if they do what it says on the tin.

And yet, having been through the tough early startup days, she’s leaving – just as the gravy train is rolling into the station.

“It’s with mixed emotions,” writes CEO Geordie Rose in an open letter to the Sanctuary AI team, “that we announce that our co-founder and CTO Suzanne has made the difficult decision to move on from Sanctuary. She helped pioneer our technological approach to AI in robotics and worked with Sanctuary since our inception in 2018.

“Suzanne is now turning her full time attention to AI safety, AI ethics, and robot consciousness. We wish her the best of success in her new endeavors and will leave it to her to share more when the time’s right. I know she has every confidence in the technology we’re developing, the people we’ve assembled, and the company’s prospects for the future.”

Gildert has made no secret of her interest in AI consciousness over the years, as evidenced in this video from last year, in which she speaks of designing robot brains that can “experience things in the same way the human mind does.”

The first step to building Carbon (our AI operating and control system) inside a general-purpose robot, would be to first understand how the human brain works.

Our Co-founder and CTO @suzannegildert explains that by using experiential learning techniques, Sanctuary AI is… pic.twitter.com/U4AfUl6uhX

— Sanctuary AI (@TheSanctuaryAI) December 1, 2023

Now, there have been certain leadership transitions here at New Atlas as well – namely, I’ve stepped up to lead the Editorial team, which I mention only as an excuse for why we haven't released the following interview earlier. My bad!

But in all my 17 years at Gizmag/New Atlas, this stands out as one of the most fascinating, wide ranging and fearless discussions I’ve had with a tech leader. If you’ve got an hour and 17 minutes, or a drive ahead of you, I thoroughly recommend checking out the full interview below on YouTube.

Interview: Former CTO of Sanctuary AI on humanoids, consciousness, AGI, hype, safety and extinction

We've also transcribed a fair whack of our conversation below if you’d prefer to scan some text. A second whack will follow, provided I get the time – but the whole thing’s in the video either way! Enjoy!

On the potential for consciousness in embodied AI robots

Loz: What's the world that you’re working to bring about?

Suzanne Gildert: Good question! I’ve always been kind of obsessed with the mind and how it works. And I think that every time we've added more minds to our world, we've had more discoveries made and more advancements made in technology and civilization.

So I think having more intelligence in the world in general, more mind, more consciousness, more awareness is something that I think is good for the world in general. I guess that's just my philosophical view.

So obviously, you can create new human minds or animal minds, but also, can we create AI minds to help populate not just the world with more intelligence and capability, but the other planets and stars? I think Max Tegmark said something like we should try to fill the universe with consciousness, which is, I think, a kind of grand and interesting goal.


This idea of AGI, and the way we’re getting there at the moment through language models like GPT, and embodied intelligence in robotics like what you guys are doing… Is there a consciousness at the end of this?

That's a really interesting question, because I kind of changed my view on this recently. So it's interesting to get asked about this as my view on it shifts.

I used to be of the opinion that consciousness is just something that would emerge when your AI system was smart enough, or you had enough intelligence and the thing started passing the Turing test, and it started behaving like a person… It would just automatically be conscious.

But I'm not sure I believe that anymore. Because we don't really know what consciousness is. And the more time you spend with robots running these neural nets, and running stuff on GPUs, it's kind of hard to start thinking about that thing actually having a subjective experience.

We run GPUs and programs on our laptops and computers all the time. And we don't assume they’re conscious. So what’s different about this thing?

It takes you into spooky territory.

It's fascinating. The stuff we, and other people in this field, do is not only hardcore science and machine learning, and robotics and mechanical engineering, but it also touches on some of these really interesting philosophical and deep topics that I think everyone cares about.

It's where the science starts to run out of explanations. But yes, the idea of spreading AI out through the cosmos… They seem more likely to get to other stars than we do. You kind of wish there was a humanoid on board Voyager.

Absolutely. Yeah, I think it's one thing to send, kind of dumb matter out there into space, which is kind of cool, like probes and things, sensors, maybe even AIs, but then to send something that's kind of like us, that's sentient and aware and has an experience of the world. I think it's a very different matter. And I'm much more interested in the second.


On what to expect in the next decade

It's interesting. The way artificial intelligence is being built, it’s not exactly us, but it’s of us. It's trained using our output, which is not the same as our experience. It has the best and the worst of humanity inside it, but it’s also an entirely different thing, these black boxes, Pandora’s boxes with little funnels of communication and interaction with the real world.

In the case of humanoids, that’ll be through a physical body and verbal and wireless communication; language models and behavior models. Where does that take us in the next 10 years?

I think we’ll see a lot of what looks like very incremental progress at the start, then it will kind of explode. I think anyone who’s been following the progress of language models over the last 10 years will attest to this.

10 years ago, we were playing with language models and they could generate something on the level of a nursery rhyme. And it went on like that for a long time; people didn't think it would get beyond that stage. But then with internet scale data, it just suddenly exploded, it went exponential. I think we’ll see the same thing with robot behavior models.

So what we’ll see is these really early little building blocks of movement and motion being automated, and then becoming commonplace. Like, a robot can move a block, stack a block, like maybe pick something up, press a button, but it's kind of still ‘researchy.’

But then at some point, I think it goes beyond that. And it will happen very radically and very rapidly, and it will suddenly explode into robots being able to do everything, seemingly out of nowhere. But if you actually track it, it's one of these predictable trends, just with the scale of data.

On humanoid robot hype levels

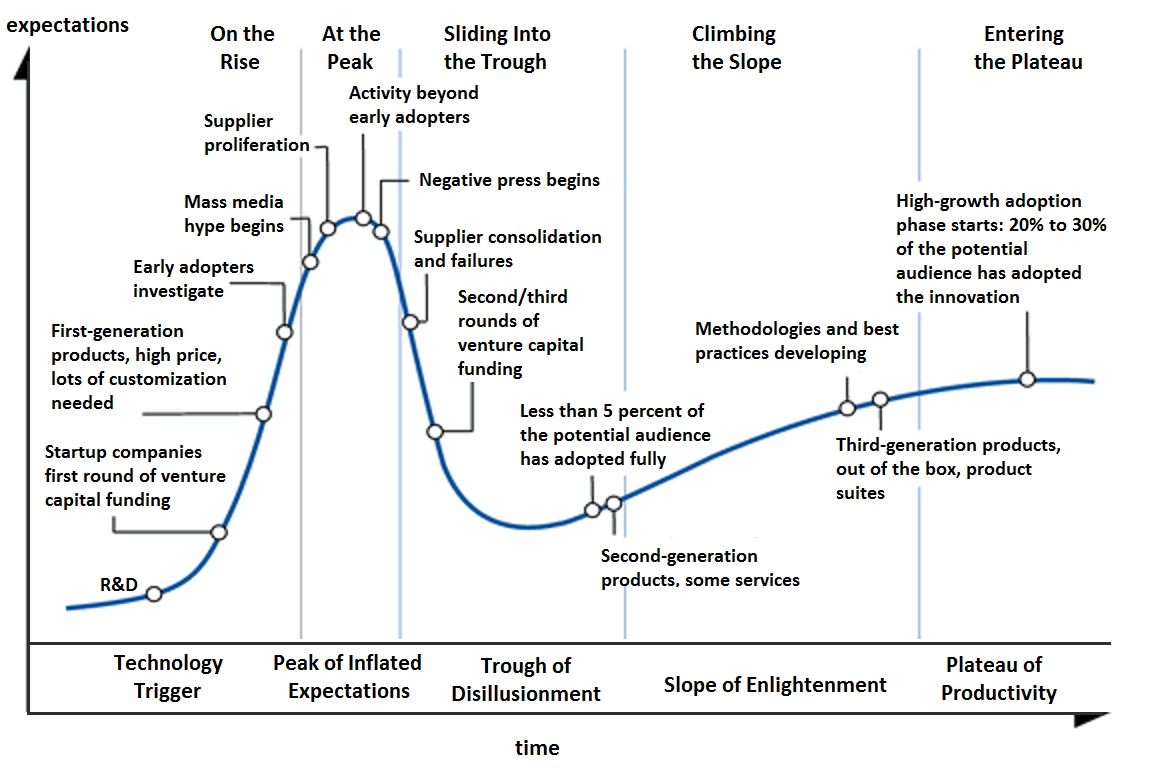

Where do humanoids sit on the old Gartner Hype Cycle, do you think? Last time I spoke to Brett Adcock at Figure, he surprised me by saying he doesn't think that cycle will apply to these things.

I do think humanoids are kind of hyped at the moment. So I actually think we're kind of close to that peak of inflated expectations right now, and I actually do think there may be a trough of disillusionment that we fall into. But I also think we will probably climb out of it pretty quickly. So it probably won't be the long, slow climb like what we're seeing with VR, for example.

But I do still think there's a while before these things take off completely. And the reason for that is the scale of the data you need, to really make these models run in a general-purpose mode.

With large language models, data was kind of already available, because we had all the text on the internet. Whereas with humanoid, general-purpose robots, the data is not there. We'll have some really interesting results on some simple tasks, simple building blocks of motion, but then it won't go anywhere until we radically upscale the data to be… I don't know, billions of training examples, if not more.

So I think that by that point, there will be a kind of a trough of ‘oh, this thing was supposed to be doing everything in a couple of years.’ And it's just because we haven't yet collected the data. So we will get there in the end. But I think people may be expecting too much too soon.

I shouldn't be saying this, because we're, like, building this technology, but it's just the truth.

It's good to set realistic expectations, though; like, they’ll be doing very, very basic tasks when they first hit the workforce.

Yeah. Like, if you're trying to build a general purpose intelligence, you have to have seen training examples from almost anything a person can do. People say, ‘oh, it can’t be that bad, by the time you're 10, you can basically manipulate kind of anything in the world, any machine or any objects, things like that. We won't take that long to get that with training data.’

But what we forget is our brain was already pre-evolved. A lot of that machinery is already baked in when we're born, so we didn't learn everything from scratch, like an AI algorithm – we have billions of years of evolution as well. You have to factor that in.

I think the amount of data needed for a general purpose AI in a humanoid robot that knows everything that we know… It's going to be like evolutionary timescale amounts of data. I'm making it sound worse than it is, because the more robots you can get out there, the more data you can collect.

And the better they get, the more robots you want, and it's kind of a virtuous cycle once it gets going. But I think there's going to be a good few years more before that cycle really starts turning.

Sanctuary AI Unveils the Next Generation of AI Robotics

On embodied AIs as robot infants

I'm trying to think what that data gathering process might look like. You guys at Sanctuary are working with teleoperation at the moment. You wear some kind of suit and goggles, you see what the robot sees, and you control its hands and body, and you do the task.

It learns what the task is, and then goes away and creates a simulated environment where it can try that task a thousand, or a million times, make mistakes, and figure out how to do it autonomously. Does this evolutionary-scale data gathering project get to a point where they can just watch humans doing things, or will it be teleoperation the whole way?

I think the easiest way to do it is the first one you mentioned, where you're actually training multiple different foundational models. What we're trying to do at Sanctuary is learn the basic atomic kind of constituents of motion, if you like. So the basic ways in which the body and the hands move in order to interact with objects.

I think once you've got that, though, you've kind of created this architecture that's a little bit like the motor memory and the cerebellum in our brain. The part that turns brain signals into body signals.

I think once you've got that, you can then hook in a whole bunch of other models that come from things like learning from video demonstration, hooking in language models, as well. You can leverage a lot of other types of data out there that aren't pure teleoperation.

But we believe strongly that you need to get that foundational building block in place, of having it understand the basic types of movements that human-like bodies do, and how those movements coordinate. Hand-eye coordination, things like that. So that's what we're focused on.

Now, you can think of it as kind of like a six month old baby, learning how to move its body in the world, like a baby in a stroller, and it's got some toys in front of it. It's just kind of learning like, where are they in physical space? How do I reach out and grab one? What happens if I touch it with one finger versus two fingers? Can I pull it towards me? These kind of basic things that babies just innately learn.

I think it's like the point we're at with these robots right now. And it sounds very basic. But it's these building blocks that then are used to build up everything we do later in life and in the world of work. We need to learn these foundations first.

Eminent .@DavidChalmers42 on consciousness: “It’s impossible for me to believe [it] is an illusion…maybe it actually protects for us to believe that consciousness is an illusion. It’s all part of the evolutionary illusion. So that’s part of the charm.” .@brainyday pic.twitter.com/YWzuB7aVh8

— Suzanne Gildert (@suzannegildert) April 28, 2024

On how to stop scallywags from ‘jailbreaking’ humanoids the way they do with LLMs

Anytime a new GPT or Gemini or whatever gets released, the first thing people do is try to break the guardrails. They try to get it to say rude words, they try to get it to do all the things it’s not supposed to do. They’re going to do the same with humanoid robots.

But the equivalent with an embodied robot… It could be kind of rough. Do you guys have a plan for that kind of thing? Because it seems really, really hard. We've had these language models now out in the world getting played with by cheeky monkeys for a long time, and there are still people finding ways to get them to do things they're not supposed to all the time. How on earth do you put safeguards around a physical robot?

That's just a really good question. I don't think anyone’s ever asked me that question before. That's cool. I like this question. So yeah, you're absolutely right. Like one of the reasons that large language models have this failure mode is because they're mostly trained end to end. So you could just send in whatever text you want, you get an answer back.

If you trained robots end to end in this way, you had billions of teleoperation examples, and the verbal input was coming in and action was coming out and you just trained one giant model… At that point, you could say anything to the robot – you know, smash the windows on all these cars on the street. And the model, if it was truly a general AI, would know exactly what that meant. And it would presumably do it if that had been in the training set.

So I think there are two ways you can avoid this being a problem. One is, you never put data in the training set that would have it exhibit the kind of behaviors that you wouldn't want. So the hope is that if you can make the training data of the kind that's ethical and moral… And obviously, that's a subjective question as well. But whatever you put into training data is what it's going to learn how to do in the world.

So maybe, not thinking about it literally, if you asked it to smash a car window, it's just going to do… whatever it has been shown is appropriate for a person to do in that situation. So that's kind of one way of getting around it.

Just to take the devil’s advocate part… If you're gonna connect it to external language models, one thing that language models are really, really good at doing is breaking down an instruction into steps. And that’ll be how language and behavior models interact; you’ll give the robot an instruction, and the LLM will create a step-by-step way to make the behavior model understand what it needs to do.

So, to my mind – and I'm purely spitballing here, so forgive me – but in that case it'd be like, I don't know how to smash something. I've never been trained on how to smash something. And a compromised LLM would be able to tell it. Pick up that hammer. Go over here. Pretend there's a nail on the window… Maybe the language model is the way through which a physical robot might be jailbroken.

It kinda reminds me of the movie Chappie: he won't shoot a person because he knows that's bad. But the guy says something like ‘if you stab someone, they just go to sleep.’ So yeah, there are these interesting tropes in sci-fi that play around a little bit with some of these ideas.

Yeah, I think it's an open question: how do we stop it from just breaking down a plan into units that themselves have never been seen to be morally good or bad in the training data? I mean, if you take an example of, like, cooking, so in the kitchen, you often cut things up with a knife.

So a robot would learn how to do that. That's a kind of atomic action that could then technically be used in a general way. So I think it's a very interesting open question as we move forward.


I think in the short term, the way people are going to get around this is by limiting the kind of language inputs that get sent into the robot. So essentially, you're trying to constrain the generality.

So the robot can use general intelligence, but it can only do very specific tasks with it, if you see what I mean? A robot will be deployed into a customer situation, say it has to stock shelves in a retail environment. So maybe at that point, no matter what you say to the robot, it will only act if it hears certain commands that are about things that it's supposed to be doing in its work environment.

So if I said to the robot, take all the things off the shelf and throw them on the floor, it wouldn't do that. Because the language model would kind of reject that. It would only accept things that sound like, you know, put that on the shelf properly…
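As a rough editorial illustration of the input-limiting idea described above, and not anything Sanctuary AI has published, a deployment could screen commands before they ever reach the robot. Everything in this Python sketch is an assumption: the word lists, the `screen_command` name, and the use of plain keyword matching, which is far cruder than the language-model filter Gildert has in mind.

```python
# Hypothetical sketch of a task allowlist for a shelf-stocking robot.
# The word lists and function name are invented for illustration only.
ALLOWED_VERBS = {"put", "place", "stock", "restock"}
BLOCKED_WORDS = {"throw", "smash", "floor"}


def screen_command(text: str) -> bool:
    """Return True only if the command looks like an in-scope stocking task."""
    cleaned = text.lower().replace(",", " ").replace(".", " ")
    words = set(cleaned.split())
    has_allowed_verb = bool(words & ALLOWED_VERBS)
    has_blocked_word = bool(words & BLOCKED_WORDS)
    return has_allowed_verb and not has_blocked_word


if __name__ == "__main__":
    for command in (
        "Put that on the shelf properly",
        "Take all the things off the shelf and throw them on the floor",
    ):
        verdict = "accept" if screen_command(command) else "reject"
        print(f"{verdict}: {command}")
```

A keyword filter like this is easy to sidestep with rephrasing, which is the loophole problem the interview goes on to discuss.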

I don't want to say that there's a solid answer to this question. One of the things that we're going to have to think very carefully about over the next five to 10 years as these general models start to come online is how do we prevent them from being… I don't want to say hacked, but misused, or people trying to find loopholes in them?

I actually think though, these loopholes, as long as we avoid them being catastrophic, can be very illuminating. Because if you said something to a robot, and it did something that a person would never do, then there's an argument that that's not really a true human-like intelligence. So there's something wrong with the way you're modeling intelligence there.

So to me, that's an interesting feedback signal of how you might want to change the model to attack that loophole, or that problem you found in it. But that's like I'm always saying when I talk to people now, this is why I think robots are going to be in research labs, in very constrained areas when they are deployed, initially.

Because I think there will be things like this that are discovered over time. Any general-purpose technology, you can never know exactly what it's going to do. So I think what we have to do is just deploy these things very slowly, very carefully. Don't just go putting them in any situation straightaway. Keep them in the lab, do as much testing as you can, and then deploy them very carefully into positions maybe where they're not initially in contact with people, or they're not in situations where things could go terribly wrong.

Let’s start with very simple things that we'd let them do. Again, a bit like children. If you were, you know, giving your five year old a little chore to do so they could earn some pocket money, you’d give them something that was pretty constrained, and you’re pretty sure nothing’s gonna go terribly wrong. You give them a little bit of independence, see how they do, and kind of go from there.

I'm always talking about this: nurturing or bringing up AIs like we bring up children. Sometimes you have to give them a little bit of independence and trust them a bit, move that envelope forward. And then if something bad happens… Well, hopefully it’s not too catastrophic, because you only gave them a little bit of independence. And then we’ll start understanding how and where these models fail.

Do you have kids of your own?

I don't, no.

Because that would be a fascinating process, bringing up kids while you're bringing up infant humanoids… Anyway, one thing that gives me hope is that you don't often see GPT or Gemini being naughty unless people have really, really tried to make that happen. People have to work hard to fool them.

I like this idea that you’re kind of building a morality into them. The idea that there are certain things humans and humanoids alike just won't do. Of course, the trouble with that is that there are certain things certain humans won't do… You can’t exactly pick the personality of a model that's been trained on the whole of humanity. We contain multitudes, and there's a lot of variation when it comes to morality.

On multi-agent supervision and human-in-the-loop

Another part of it is this kind of semi-autonomous mode you can have, where you have human oversight at a high level of abstraction. So a person can take over at any point. So you have an AI system that oversees a fleet of robots, and detects that something different is happening, or something potentially dangerous might be happening, and you can actually drop back to having a human teleoperator in the loop.

We use that for edge case handling because when our robot deploys, we want the robot to be gathering data on the job and actually learning on the job. So it's important for us that we can switch the mode of the robot between teleoperation and autonomous mode on the fly. That might be another way of helping maintain safety, having multiple operators in the loop watching everything while the robot’s starting out its autonomous journey in life.
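The drop-back-to-teleoperation pattern described above can be sketched loosely in Python. This is an editorial illustration under stated assumptions rather than Sanctuary's software: the `Mode` enum, the `Robot` class, the `anomaly_score` input and the 0.8 threshold are all hypothetical, and the sketch assumes only a human operator may hand control back to the robot.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    TELEOPERATION = "teleoperation"


@dataclass
class Robot:
    """Hypothetical stand-in for one robot in a supervised fleet."""
    name: str
    mode: Mode = Mode.AUTONOMOUS


def supervise(robot: Robot, anomaly_score: float, threshold: float = 0.8) -> Mode:
    """Switch to teleoperation when the overseer flags something unusual.

    anomaly_score is an assumed 0-to-1 signal from the overseeing AI system.
    The supervisor never switches back by itself.
    """
    if anomaly_score >= threshold:
        robot.mode = Mode.TELEOPERATION
    return robot.mode


def hand_back(robot: Robot) -> Mode:
    """Called by the human operator once the edge case has been handled."""
    robot.mode = Mode.AUTONOMOUS
    return robot.mode


if __name__ == "__main__":
    phoenix = Robot("phoenix-1")
    print(supervise(phoenix, 0.2).value)
    print(supervise(phoenix, 0.95).value)
    print(hand_back(phoenix).value)
```

Making the return to autonomy a separate, human-triggered step is one design choice among several; it keeps a person responsible for deciding when an edge case is over.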

Another way is to integrate other kinds of reasoning systems. Rather than something like a large language model – which is a black box, you really don't know how it's working – some symbolic logic and reasoning systems from the 60s through to the 80s and 90s do allow you to trace how a decision is made. I think there's still a lot of good ideas there.

But combining these technologies is not easy… It'd be cool to have almost like a Mr. Spock – this analytical, mathematical AI that's calculating the logical consequences of an action, and that can step in and stop the neural net that's just kind of learned from whatever it has been shown.

Enjoy the entire interview in the video below – or stay tuned for Suzanne Gildert’s thoughts on post-labor societies, extinction-level threats, the end of human usefulness, how governments should be preparing for the age of embodied AI, and how she’d be proud if these machines managed to colonize the stars and spread a new type of consciousness.

Interview: Former CTO of Sanctuary AI on humanoids, consciousness, AGI, hype, safety and extinction

Source: Sanctuary AI