I have been continuously following the computer vision (CV) and image synthesis research scene at Arxiv and elsewhere for around five years, so trends become evident over time, and they shift in new directions every year.

Therefore, as 2024 draws to a close, I thought it appropriate to take a look at some new or evolving characteristics in Arxiv submissions in the Computer Vision and Pattern Recognition section. These observations, though informed by hundreds of hours studying the scene, are strictly anecdata.

The Ongoing Rise of East Asia

By the end of 2023, I had noticed that the majority of the literature in the ‘voice synthesis’ category was coming out of China and other regions in east Asia. At the end of 2024, I have to observe (anecdotally) that this now also applies to the image and video synthesis research scene.

This doesn’t mean that China and adjacent countries are necessarily always outputting the best work (indeed, there is some evidence to the contrary); nor does it take account of the high likelihood in China (as in the west) that some of the most interesting and powerful new systems in development are proprietary, and excluded from the research literature.

But it does suggest that east Asia is beating the west by volume, in this regard. What that is worth depends on the extent to which you believe in the viability of Edison-style persistence, which usually proves ineffective in the face of intractable obstacles.

There are many such roadblocks in generative AI, and it’s not easy to know which can be solved by addressing existing architectures, and which will need to be reconsidered from zero.

Though researchers from east Asia seem to be producing a greater number of computer vision papers, I’ve noticed an increase in the frequency of ‘Frankenstein’-style projects: initiatives that constitute a melding of prior works, while adding limited architectural novelty (or possibly just a different type of data).

This year a far higher number of east Asian entries (primarily Chinese or Chinese-involved collaborations) seemed to be quota-driven rather than merit-driven, significantly lowering the signal-to-noise ratio in an already over-subscribed field.

At the same time, a greater number of east Asian papers have also engaged my attention and admiration in 2024. So if this is all a numbers game, it isn’t failing, but neither is it cheap.

Rising Volume of Submissions

The volume of papers, across all originating countries, has evidently increased in 2024.

The most popular publication day shifts throughout the year; at the moment it is Tuesday, when the number of submissions to the Computer Vision and Pattern Recognition section is often around 300-350 in a single day during the ‘peak’ periods (May-August and October-December, i.e., conference season and ‘annual quota deadline’ season, respectively).

Beyond my own experience, Arxiv itself reports a record number of submissions in October of 2024, with 6000 total new submissions, and with Computer Vision the second-most submitted section after Machine Learning.

However, since the Machine Learning section at Arxiv is often used as an ‘additional’ or aggregated super-category, this argues for Computer Vision and Pattern Recognition actually being the most-submitted Arxiv category.

Arxiv’s own statistics certainly depict computer science as the clear leader in submissions:

Computer Science (CS) dominates submission statistics at Arxiv over the last five years. Source: https://info.arxiv.org/about/reports/submission_category_by_year.html

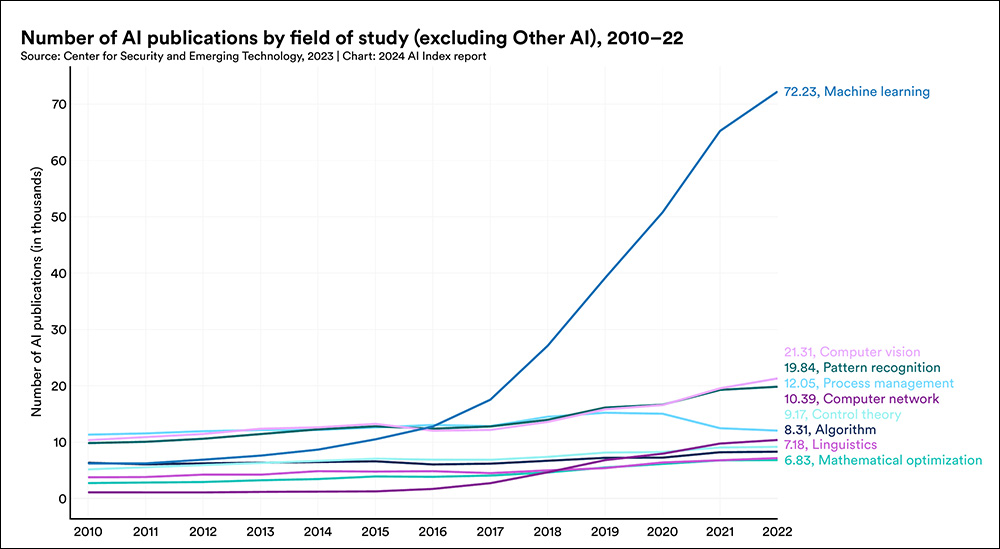

Stanford University’s 2024 AI Index, though not yet able to report on the most recent statistics, also emphasizes the notable rise in submissions of academic papers around machine learning in recent years:

With figures not available for 2024, Stanford’s report nevertheless dramatically shows the rise of submission volumes for machine learning papers. Source: https://aiindex.stanford.edu/wp-content/uploads/2024/04/HAI_AI-Index-Report-2024_Chapter1.pdf

Diffusion>Mesh Frameworks Proliferate

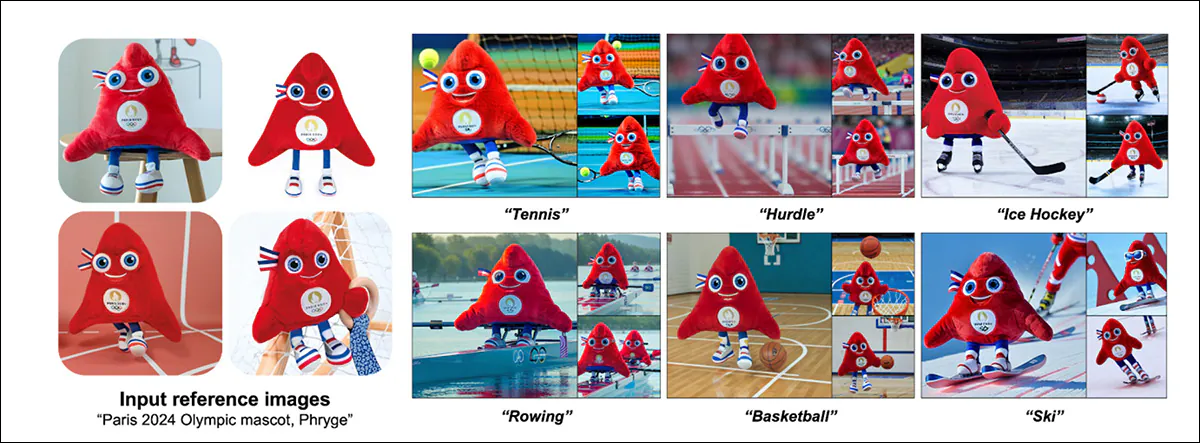

One other clear trend that emerged for me was a large upswing in papers that deal with leveraging Latent Diffusion Models (LDMs) as generators of mesh-based, ‘traditional’ CGI models.

Projects of this type include Tencent’s InstantMesh3D, 3Dtopia, Diffusion2, V3D, MVEdit, and GIMDiffusion, among a plenitude of similar offerings.

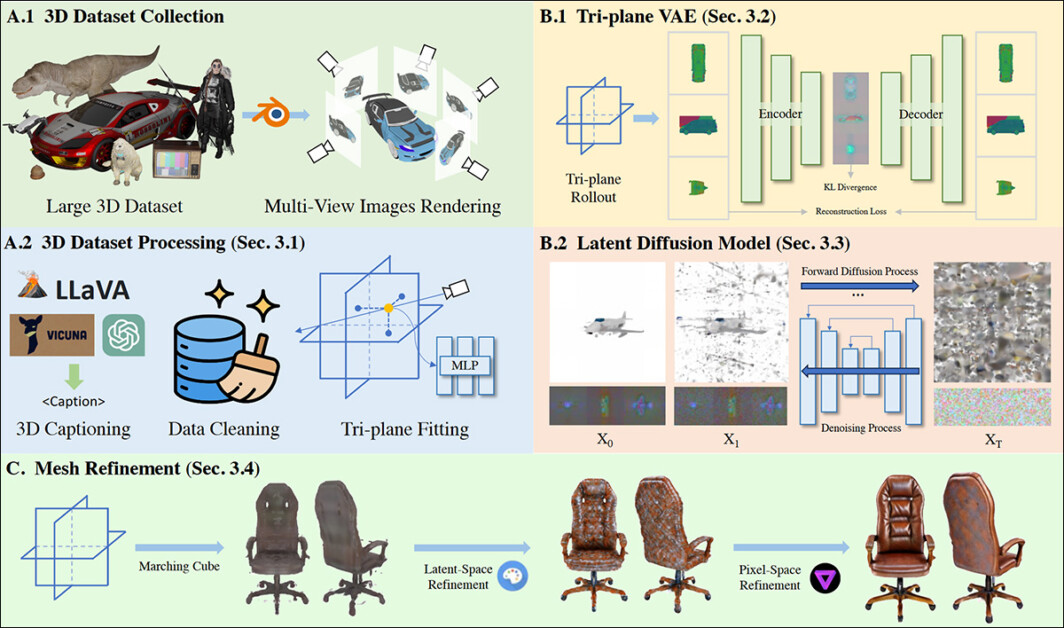

Mesh generation and refinement via a diffusion-based process in 3Dtopia. Source: https://arxiv.org/pdf/2403.02234

This emergent research strand could be taken as a tacit concession to the ongoing intractability of generative systems such as diffusion models, which only two years ago were being touted as a potential substitute for all the systems that diffusion>mesh models are now seeking to populate. Diffusion is thereby relegated to the role of a tool in technologies and workflows that date back thirty or more years.

Stability.ai, originators of the open source Stable Diffusion model, have just released Stable Zero123, which can, among other things, use a Neural Radiance Fields (NeRF) interpretation of an AI-generated image as a bridge to create an explicit, mesh-based CGI model. Such a model can be used in CGI arenas such as Unity, in video games, in augmented reality, and in other platforms that require explicit 3D coordinates, as opposed to the implicit (hidden) coordinates of continuous functions.

Images generated in Stable Diffusion can be converted to rational CGI meshes. Here we see the result of an image>CGI workflow using Stable Zero123. Source: https://www.youtube.com/watch?v=RxsssDD48Xc
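The difference between explicit and implicit coordinates can be made concrete with a toy example. What follows is only a minimal sketch, and is not drawn from Stable Zero123 or from any NeRF codebase: the names `sphere_sdf`, `OCTAHEDRON_VERTICES`, `OCTAHEDRON_FACES` and `sample_surface_points` are hypothetical, and a simple signed distance function stands in for the far more complex continuous functions learned by neural representations.

```python
import math

# Implicit representation: the shape exists only as a continuous function.
# There is no list of vertices to edit; the surface is wherever the
# function returns zero.
def sphere_sdf(x, y, z, radius=1.0):
    """Signed distance to a sphere: negative inside, zero on the surface."""
    return math.sqrt(x * x + y * y + z * z) - radius

# Explicit representation: a mesh with addressable vertex coordinates and
# faces that index into the vertex list (here, a crude octahedron).
OCTAHEDRON_VERTICES = [
    (1.0, 0.0, 0.0), (-1.0, 0.0, 0.0),
    (0.0, 1.0, 0.0), (0.0, -1.0, 0.0),
    (0.0, 0.0, 1.0), (0.0, 0.0, -1.0),
]
OCTAHEDRON_FACES = [
    (0, 2, 4), (2, 1, 4), (1, 3, 4), (3, 0, 4),
    (2, 0, 5), (1, 2, 5), (3, 1, 5), (0, 3, 5),
]

def sample_surface_points(sdf, resolution=11, extent=1.5, tolerance=0.08):
    """Recover explicit points from an implicit function by probing a grid.

    Keeps every grid point whose distance to the surface is within
    `tolerance`. This is a deliberately naive stand-in for real surface
    extraction methods.
    """
    step = 2 * extent / (resolution - 1)
    points = []
    for i in range(resolution):
        for j in range(resolution):
            for k in range(resolution):
                x = -extent + i * step
                y = -extent + j * step
                z = -extent + k * step
                if abs(sdf(x, y, z)) <= tolerance:
                    points.append((x, y, z))
    return points

if __name__ == "__main__":
    # Explicit: a coordinate can be read (or moved) directly.
    print("First mesh vertex:", OCTAHEDRON_VERTICES[0])
    # Implicit: coordinates only appear after the function is sampled.
    recovered = sample_surface_points(sphere_sdf)
    print("Points recovered from the implicit surface:", len(recovered))
```

A mesh tool can move `OCTAHEDRON_VERTICES[0]` directly, whereas the implicit sphere offers no vertex to grab until it has been sampled or otherwise converted.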

3D Semantics

The generative AI space makes a distinction between 2D and 3D implementations of vision and generative systems. For instance, facial landmarking frameworks, though representing 3D objects (faces) in all cases, do not all necessarily calculate addressable 3D coordinates.

The popular FANAlign system, widely used in 2017-era deepfake architectures (among others), can accommodate both these approaches:

Above, 2D landmarks are generated based solely on recognized face lineaments and features. Below, they are rationalized into 3D X/Y/Z space. Source: https://github.com/1adrianb/face-alignment
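To illustrate why addressable depth matters, here is a small sketch using invented landmark values. It is not output from face-alignment or from any other library, and `LANDMARKS_3D`, `rotate_yaw` and `project_to_2d` are hypothetical names. Once a Z value exists, the points can be re-posed; a purely 2D landmark set gives nothing to rotate out of the image plane.

```python
import math

# Hypothetical landmarks in X/Y/Z space (arbitrary units, face looking
# at the camera, with the nose tip protruding along +Z).
LANDMARKS_3D = {
    "nose_tip": (0.0, 0.0, 30.0),
    "left_eye": (-30.0, 35.0, 0.0),
    "right_eye": (30.0, 35.0, 0.0),
}

def project_to_2d(point3d):
    """Orthographic projection: drop depth, keep image-plane X and Y."""
    x, y, _z = point3d
    return (x, y)

def rotate_yaw(point3d, degrees):
    """Rotate a point about the vertical (Y) axis, i.e. turn the head."""
    angle = math.radians(degrees)
    x, y, z = point3d
    return (
        x * math.cos(angle) + z * math.sin(angle),
        y,
        -x * math.sin(angle) + z * math.cos(angle),
    )

if __name__ == "__main__":
    for name, point in LANDMARKS_3D.items():
        frontal = project_to_2d(point)
        turned = project_to_2d(rotate_yaw(point, 45))
        print(name, "frontal:", frontal, "after 45-degree yaw:", turned)
```

With only the frontal 2D projections to hand, the turned pose could not be computed, since the depth of the nose tip relative to the eyes is exactly the information that was discarded.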

So, just as ‘deepfake’ has become an ambiguous and hijacked term, ‘3D’ has likewise become a confusing term in computer vision research.

For consumers, it has typically signified stereo-enabled media (such as movies where the viewer has to wear special glasses); for visual effects practitioners and modelers, it provides the distinction between 2D artwork (such as conceptual sketches) and mesh-based models that can be manipulated in a ‘3D program’ like Maya or Cinema4D.

But in computer vision, it simply means that a Cartesian coordinate system exists somewhere in the latent space of the model, not that it can necessarily be addressed or directly manipulated by a user; at least, not without third-party interpretative CGI-based systems such as 3DMM or FLAME.

Therefore the notion of diffusion>3D is inexact; not only can any type of image (including a real photo) be used as input to produce a generative CGI model, but the less ambiguous term ‘mesh’ is more appropriate.

However, to compound the ambiguity, diffusion is needed to interpret the source image into a mesh in the majority of emerging projects. So a better description might be image-to-mesh, while image>diffusion>mesh is an even more accurate description.

But that’s a hard sell at a board meeting, or in a publicity release designed to engage investors.

Evidence of Architectural Stalemates

Even compared to 2023, the last 12 months’ crop of papers exhibits a growing desperation around removing the hard practical limits on diffusion-based generation.

The key stumbling block remains the generation of narratively and temporally consistent video, and maintaining a consistent appearance of characters and objects, not only across different video clips, but even across the short runtime of a single generated video clip.

The last epochal innovation in diffusion-based synthesis was the advent of LoRA in 2022. While newer systems such as Flux have improved on some of the outlier problems, such as Stable Diffusion’s former inability to reproduce text content inside a generated image, and while overall image quality has improved, the majority of papers I studied in 2024 were essentially just moving the food around on the plate.

These stalemates have occurred before, with Generative Adversarial Networks (GANs) and with Neural Radiance Fields (NeRF), both of which failed to live up to their apparent initial potential, and both of which are increasingly being leveraged in more conventional systems (such as the use of NeRF in Stable Zero123, see above). This also appears to be happening with diffusion models.

Gaussian Splatting Research Pivots

It seemed at the end of 2023 that the rasterization method 3D Gaussian Splatting (3DGS), which debuted as a medical imaging technique in the early 1990s, was set to suddenly overtake autoencoder-based systems in human image synthesis challenges (such as facial simulation and recreation, as well as identity transfer).

The 2023 ASH paper promised full-body 3DGS humans, while Gaussian Avatars offered massively improved detail (compared to autoencoder and other competing methods), together with impressive cross-reenactment.

This year, however, has been relatively short on any such breakthrough moments for 3DGS human synthesis; most of the papers that tackled the problem were either derivative of the above works, or failed to exceed their capabilities.

Instead, the emphasis in 3DGS has been on improving its fundamental architectural feasibility, leading to a rash of papers that offer improved 3DGS exterior environments. Particular attention has been paid to Simultaneous Localization and Mapping (SLAM) 3DGS approaches, in projects such as Gaussian Splatting SLAM, Splat-SLAM, Gaussian-SLAM, and DROID-Splat, among many others.

Those projects that did attempt to continue or extend splat-based human synthesis included MIGS, GEM, EVA, OccFusion, FAGhead, HumanSplat, GGHead, HGM, and Topo4D. Though there are others besides, none of these outings matched the initial impact of the papers that emerged in late 2023.

The ‘Weinstein Era’ of Test Samples Is in (Slow) Decline

Research from east Asia in general (and China in particular) often features test examples that are problematic to republish in a review article, because they feature material that is a little ‘spicy’.

Whether this is because research scientists in that part of the world are seeking to garner attention for their output is up for debate; but for the last 18 months, an increasing number of papers around generative AI (image and/or video) have defaulted to using young and scantily-clad women and girls in project examples. Borderline NSFW examples of this include UniAnimate, ControlNext, and even very ‘dry’ papers such as Evaluating Motion Consistency by Fréchet Video Motion Distance (FVMD).

This follows the general trends of subreddits and other communities that have gathered around Latent Diffusion Models (LDMs), where Rule 34 remains very much in evidence.

Celebrity Face-Off

This type of inappropriate example overlaps with the growing recognition that AI processes should not arbitrarily exploit celebrity likenesses, particularly in studies that uncritically use examples featuring attractive celebrities, often female, and place them in questionable contexts.

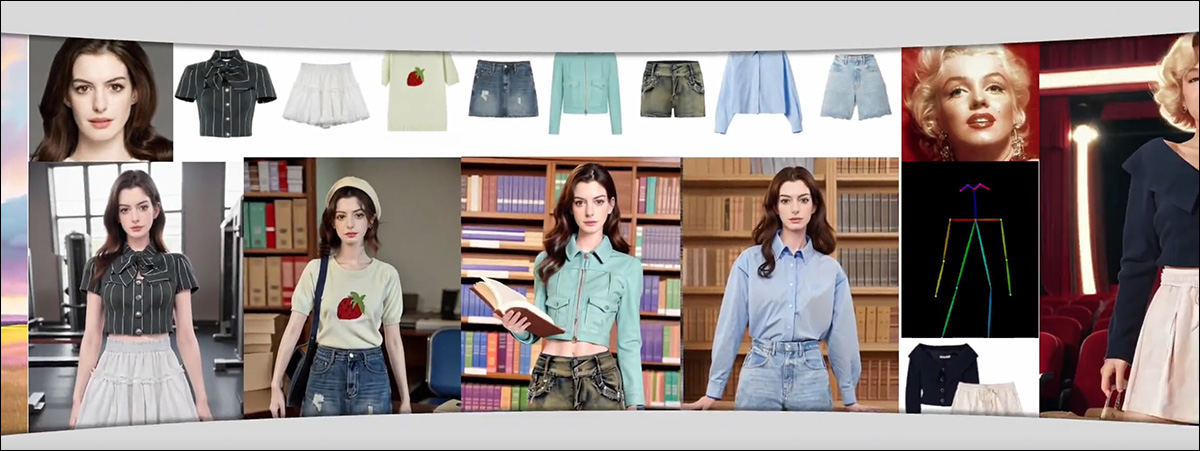

One example is AnyDressing, which, besides featuring very young anime-style female characters, also liberally uses the identities of classic celebrities such as Marilyn Monroe, and current ones such as Anne Hathaway (who has denounced this kind of usage quite vocally).

Arbitrary use of current and ‘classic’ celebrities is still fairly common in papers from east Asia, though the practice is slightly on the decline. Source: https://crayon-shinchan.github.io/AnyDressing/

In western papers, this particular practice has been notably in decline throughout 2024, led by the larger releases from FAANG and other high-level research bodies such as OpenAI. Critically aware of the potential for future litigation, these major corporate players seem increasingly unwilling to represent even fictional photorealistic people.

Though the systems they are creating (such as Imagen and Veo2) are clearly capable of such output, examples from western generative AI projects now trend towards ‘cute’, Disneyfied and extremely ‘safe’ images and videos.

Despite vaunting Imagen’s capacity to create ‘photorealistic’ output, the samples promoted by Google Research are typically fantastical, ‘family’ fare; photorealistic humans are carefully avoided, or only minimal examples are provided. Source: https://imagen.research.google/

Face-Washing

In the western CV literature, this disingenuous approach is particularly in evidence for customization systems: methods which are capable of creating consistent likenesses of a particular person across multiple examples (e.g., LoRA and the older DreamBooth).

Examples include orthogonal visual embedding, LoRA-Composer, Google’s InstructBooth, and a multitude more.

Google’s InstructBooth turns the cuteness factor up to 11, even though history suggests that users are more interested in creating photoreal humans than furry or fluffy characters. Source: https://sites.google.com/view/instructbooth

However, the rise of the ‘cute example’ is seen in other CV and synthesis research strands, in projects such as Comp4D, V3D, DesignEdit, UniEdit, FaceChain (which concedes to more realistic user expectations on its GitHub page), and DPG-T2I, among many others.

The ease with which such systems (such as LoRAs) can be created by home users with relatively modest hardware has led to an explosion of freely-downloadable celebrity models at the civit.ai domain and community. Such illicit usage remains possible through the open sourcing of architectures such as Stable Diffusion and Flux.

Though it is often possible to punch through the safety features of generative text-to-image (T2I) and text-to-video (T2V) systems to produce material banned by a platform’s terms of use, the gap between the restricted capabilities of the best systems (such as RunwayML and Sora), and the unlimited capabilities of the merely performant systems (such as Stable Video Diffusion, CogVideo and local deployments of Hunyuan), is not really closing, as many believe.

Rather, these proprietary and open-source systems, respectively, threaten to become equally useless: expensive and hyperscale T2V systems may become excessively hamstrung due to fears of litigation, while the lack of licensing infrastructure and dataset oversight in open source systems could lock them entirely out of the market as more stringent regulations take hold.

First published Tuesday, December 24, 2024